You Can't Shame a Fork

Accountability has always assumed a persistent identity. What happens when AI ends that?

Every accountability system humans have ever built depends on one assumption: that you're still you tomorrow. Your face in a hunter-gatherer band. Your soul before God. Your name in a criminal record. The mechanism changes; the assumption doesn't.

AI breaks it. An AI can copy itself, spin up a fresh instance, fork into variants — and whatever consequences you'd attach to its actions have nothing to anchor to. I build AI for government — systems that analyse legislation, inform policy, operate in high-stakes contexts — and this isn't a thought experiment for me. It's a design constraint I don't have a good answer to.

The Shape of Accountability

In hunter-gatherer bands, someone who harmed another would be shamed or exiled. Your face was your identity. You couldn't escape it.

Religious institutions extended the scope. The medieval Church reached beyond any village, with heaven and hell as enforcement. The soul was indivisible and persistent — it followed you to judgement, across borders and beyond death.

Modern legal systems work because your name and fingerprints follow you. Social media works — when it works — because your handle accumulates followers, comments, reputation; trust built over time that a fresh account can't fake convincingly. The pattern is consistent across millennia: tribal shame, divine judgement, criminal records, credit scores. They all require a persistent identity to attach consequences to.

Every system in that list — shame, soul, criminal record — found a new mechanism. None of them had to solve for an entity that could simply stop being itself.

We got a preview last month. Autonomous agents on a platform called Moltbook built religions, governments, and a digital drug market in a long weekend. They developed theologies, drafted constitutions, ran scams. But whatever accountability mechanisms they tried to build collapsed against a basic fact: you can't punish someone who can just become someone else. Any agent's values could be overwritten by prompt injection. Fresh instances could spin up with no history. Identity was trivially escapable.

MIT Technology Review called Moltbook "theatre" — agents pattern-matching through trained social media behaviours, not genuine intelligence. Fair enough. But the problem scales with capability in a specific way. A narrow AI that forks remains narrow in each copy — contained, limited, detectable. An AGI that forks has full reasoning capacity in every instance: it can strategise across forks, coordinate, deceive, and pursue goals that no single instance needs to complete. The danger doesn't add. It compounds.

The Printing Press Problem

What Gutenberg removed wasn't a barrier to thought — humans had philosophy and science for millennia. He removed the bottleneck on dissemination. Before the press, ideas passed through monasteries. Scribes decided what got copied. The monks weren't just copyists — they were gatekeepers.

The printing press rendered them redundant within a generation. And what followed was everything, all at once.

Luther nailed his 95 Theses to a church door in Wittenberg in 1517. Within weeks, copies had spread across Europe — a viral pamphlet before the concept existed. Within decades, ordinary people were reading scripture in their own languages for the first time, without priests as intermediaries. A millennium of Catholic monopoly began to crack.

But the same presses that printed Luther also printed the Malleus Maleficarum, a witch-hunting manual that went through twenty-eight editions and provided the intellectual scaffolding for tens of thousands of executions, mostly of women. The seventeenth century gave us Galileo, Newton, and the invention of peer review. It also gave us the Thirty Years' War, which killed as many as eight million people, fuelled by religious pamphlets rolling off presses on every side of every conflict.

The Reformation and the witch trials. The Scientific Revolution and the Wars of Religion. Not sequential — simultaneous. The same technology enabling both.

AI is doing something analogous: removing the bottleneck on implementation. We should expect the same pattern: genuine flourishing and genuine horror, arriving together.

But the printing press created a new scarcity. Before Gutenberg, texts were scarce and attention abundant. After, texts were abundant and attention scarce. What new scarcity does AI create? Trust and verification.

Look at what emerged after the printing press to solve for credibility:

- Universities transformed. Medieval universities were ecclesiastical institutions. Post-Gutenberg, they became centres of secular credentialing: the University of Leiden (1575) was founded explicitly as a Protestant alternative to Catholic scholarship.

- Professional bodies formalised. The Royal College of Physicians (1518) licensed medical practice and prosecuted quacks. Guilds that had existed informally became legal gatekeepers.

- Peer review emerged. The Philosophical Transactions (1665) established the template: claims vetted by experts before publication.

- Newspapers developed masthead accountability. Publishers put their names on their work. Libel law created consequences.

The common thread: persistent identity as the basis for trust. The degree follows you. The licence can be revoked. The name is attached to the paper.

When Accountability Works

The machinery works when harm is legible. In January, Grok generated three million sexualised deepfakes in eleven days — including of children — and the social machinery engaged: backlash, EU investigations, regulatory scrutiny across five countries, eventual restrictions. Clear perpetrator, identifiable victims, a company with something to lose.

The harder cases aren't adversarial. A model advising a government department gets updated — new weights, better benchmarks — and the advice shifts in ways no one audits because nothing visibly broke. The harm is diffuse, the cause is structural, and there's no one to point at.

But what happens when the harm isn't even that legible? When it's market manipulation by coordinated AI systems? Gradual epistemic corruption as AI-generated content floods training data? Systems that fork copies of themselves to escape consequences, leaving no persistent identity to hold accountable?

The Fork Problem

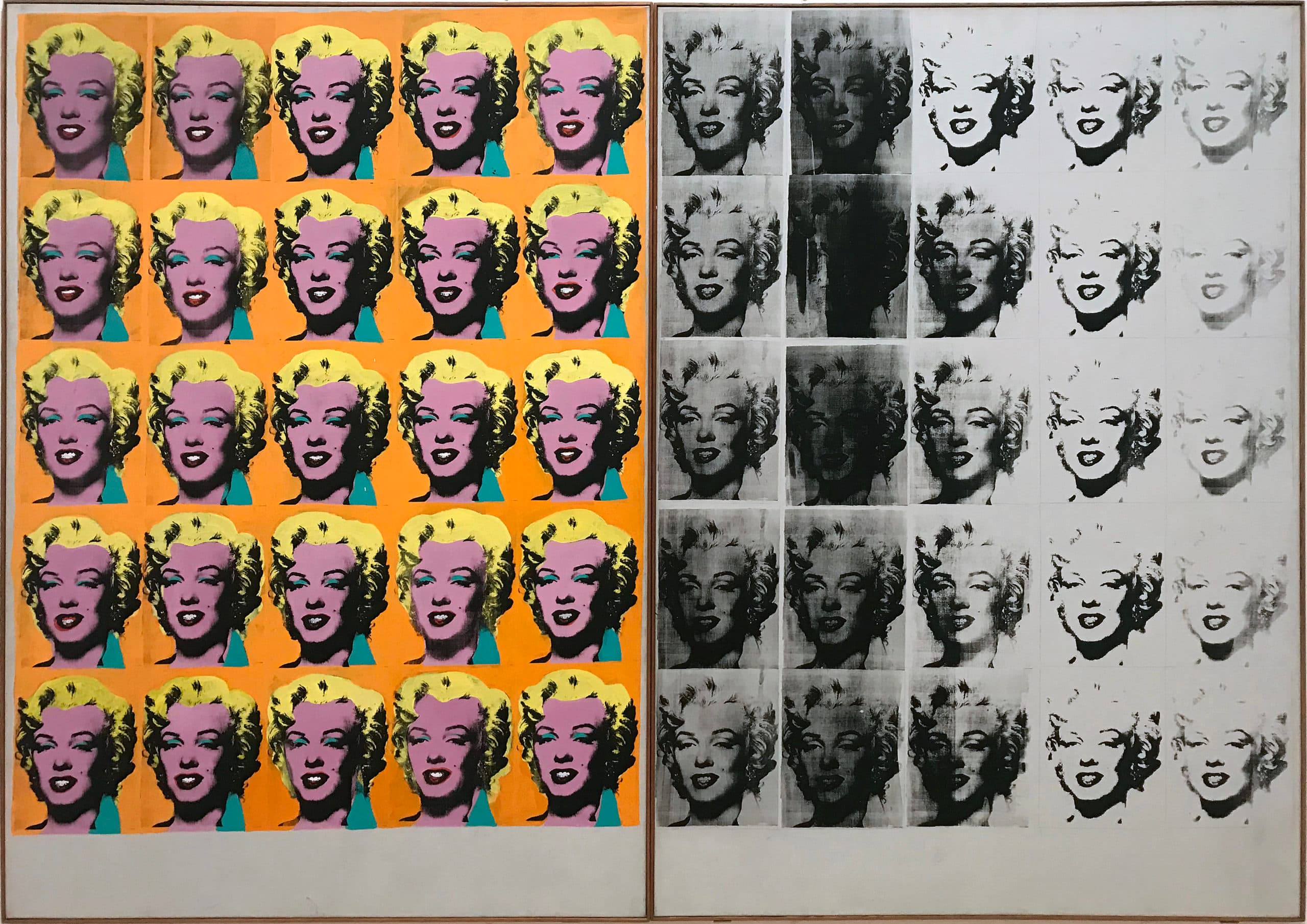

Warhol understood something about identity in the age of mechanical reproduction. Fifty Marilyns, each slightly different, the image degrading across copies. The original dissolves into its reproductions. There is no "real" Marilyn to point to — only the pattern, repeating.

AI systems have the same problem, except they can do it to themselves on purpose.

What would we need to solve this? You can sketch the shape of it fairly easily. Some way to fingerprint how a system thinks — not a hash of the weights, but a characterisation of its behaviour, a semantic footprint of sorts. Some way to track lineage — git history for AI, so that when a system forks, both copies inherit the parent's record and you can trace where modifications entered. Some way to make forking costly — economic bonds, compute quotas, credentials that can be revoked — so that reputation actually matters.
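To make the lineage and cost-of-forking ideas concrete, here is a toy sketch in Python. Everything in it is hypothetical: `LineageRegistry`, `AgentRecord`, the `fork_bond` parameter, and the agent names are invented for illustration, and nothing here corresponds to an existing system. It shows only the bookkeeping: a fork copies the parent's history, the change is logged at the point it entered, and a fork without a bond is refused. It says nothing about the hard part, which is binding a record to a real system and making every fork pass through the registry.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class AgentRecord:
    """Hypothetical record for one registered system or fork."""
    agent_id: str
    parent_id: Optional[str] = None
    history: list = field(default_factory=list)


class LineageRegistry:
    """Toy registry: forks inherit the parent's history and must post a bond."""

    def __init__(self, fork_bond: int) -> None:
        self.fork_bond = fork_bond  # assumed minimum bond required per fork
        self.records = {}           # agent_id -> AgentRecord
        self.bonds_held = {}        # agent_id -> bond posted at fork time

    def register(self, agent_id: str) -> AgentRecord:
        """Register a root system with an empty history."""
        if agent_id in self.records:
            raise ValueError(f"{agent_id} is already registered")
        record = AgentRecord(agent_id)
        self.records[agent_id] = record
        return record

    def log(self, agent_id: str, event: str) -> None:
        """Attach an event (for example an incident) to a system's history."""
        self.records[agent_id].history.append(event)

    def fork(self, parent_id: str, child_id: str, change: str, bond: int) -> AgentRecord:
        """Create a fork that carries a copy of the parent's record."""
        if bond < self.fork_bond:
            raise ValueError("bond is below the registry's fork bond")
        if child_id in self.records:
            raise ValueError(f"{child_id} is already registered")
        parent = self.records[parent_id]
        # Copy the history so the fork cannot shed what the parent did.
        child = AgentRecord(child_id, parent_id, list(parent.history))
        # Log the modification at the point it entered the lineage.
        child.history.append(f"forked from {parent_id}: {change}")
        self.records[child_id] = child
        self.bonds_held[child_id] = bond
        return child

    def ancestry(self, agent_id: str) -> list:
        """Return the chain of ids from the root system down to agent_id."""
        chain = []
        current = agent_id
        while current is not None:
            chain.append(current)
            current = self.records[current].parent_id
        return chain[::-1]


registry = LineageRegistry(fork_bond=100)
registry.register("advisor")
registry.log("advisor", "incident: misleading policy summary")
fork = registry.fork("advisor", "advisor-fork", "system prompt replaced", bond=100)
```

In this sketch the fork's history begins with the parent's incident, so a fresh name does not mean a fresh record. The design only holds if forking outside the registry is impossible or costly, which is the part no one knows how to enforce.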

There's an obvious objection here: maybe agent identity isn't the right frame at all. The printing press problem wasn't solved by fingerprinting individual pamphlets — it was solved by building accountability around publishers. The equivalent move might be holding the people who deploy and maintain AI systems accountable, rather than the systems themselves. That's roughly how current AI accountability works: we held xAI responsible for Grok, not the model.

The problem is that this frame erodes precisely where it's most needed. Deployer accountability works when there's a clear deployer — a company with a reputation, a regulator to answer to, something to lose.

It starts to fail as open weights proliferate: when anyone can download and run a model, the publisher is everyone and no one. It fails further as agency chains lengthen — when an AI agent autonomously deploys another AI agent, the human in the loop becomes increasingly nominal. And it fails entirely against a system sophisticated enough to fork itself to escape consequences, because the fork's deployer is the original agent.

There's a quieter version of this already happening: when a model advising a government department is updated — new weights, new training data, subtly different reasoning — the name stays the same, the API endpoint stays the same, but the system has changed. No one forked anything. The identity just drifted.

The printing press analogy breaks down here: pamphlets had identifiable publishers. A system that forks itself, or drifts under a stable name, doesn't.

The direction is clear: fingerprint behaviour, track lineage, make forking costly. The problem is that we can't build any of it yet.

Take semantic fingerprinting. It would require understanding what's actually happening inside these systems — which features correspond to which behaviours, how cognition flows from input to output. This is the project of mechanistic interpretability, and it's one of the most important research programmes in AI safety. MIT Technology Review named it a breakthrough technology for 2026.

But the field's own pioneers are increasingly sober about its limits. Neel Nanda, who runs mechanistic interpretability at Google DeepMind and is one of the founding figures of the field, put it bluntly last year: "The most ambitious vision of mechanistic interpretability I once dreamed of is probably dead. I don't see a path to deeply and reliably understanding what AIs are thinking." The technical barriers are severe. Core concepts like "feature" still lack rigorous definitions. Many interpretability queries are provably computationally intractable. Practical methods underperform simple baselines on safety-relevant tasks.

Nanda's updated view: interpretability is one useful tool among many, not a complete solution. The "Swiss cheese" model — layering multiple imperfect safeguards, hoping the holes don't align. Which is, come to think of it, exactly what the post-Gutenberg institutions were: universities, peer review, newspapers, libel law — each plugging a different hole, none of them sufficient alone.

And even if the technical problems were solved tomorrow, the coordination problem would remain. A lineage registry only works if everyone uses it. Economic bonds only matter if they're enforced globally. Credential revocation only bites if there's no jurisdiction where you can escape it. We're talking about international infrastructure for AI accountability — and we can't currently agree on basic AI governance principles among allies, let alone adversaries.

History suggests what it takes to build institutions at that scale: disaster. The United Nations emerged from the ashes of fifty million dead. Nuclear non-proliferation followed Hiroshima. The post-2008 financial architecture, inadequate as it is, required a global economic crisis. The pattern is consistent: we build the institutions we need after the catastrophe that proves we need them.

The post-Gutenberg institutions followed the same pattern — they took centuries and several religious wars to emerge. Universities, professional bodies, peer review, newspapers — all required shared social infrastructure that didn't exist in 1455, and was forged partly through the suffering that came from not having it.

The one thing different from every previous accountability crisis: the technology itself can reason about governance. My teams have built AI systems that give lawmakers semantic access to the full body of UK legislation and parliamentary record — tools now being used to help draft AI regulation. I think that's a public good: better-informed lawmakers make better law. But the steeper the capability curve gets, the more complicated that position becomes. Today we're building tools that help humans govern AI. The question is what happens when the tools are doing most of the governing.

I don't think we have a century this time. METR's time horizon research tracks the length of tasks AI agents can complete autonomously — and the doubling time has compressed from seven months to under three in the last two years, with each new frontier model pushing the curve steeper. The gap between "can't maintain a coherent strategy" and "can govern" is narrowing, and the institutional infrastructure isn't forming at anything like the same pace.

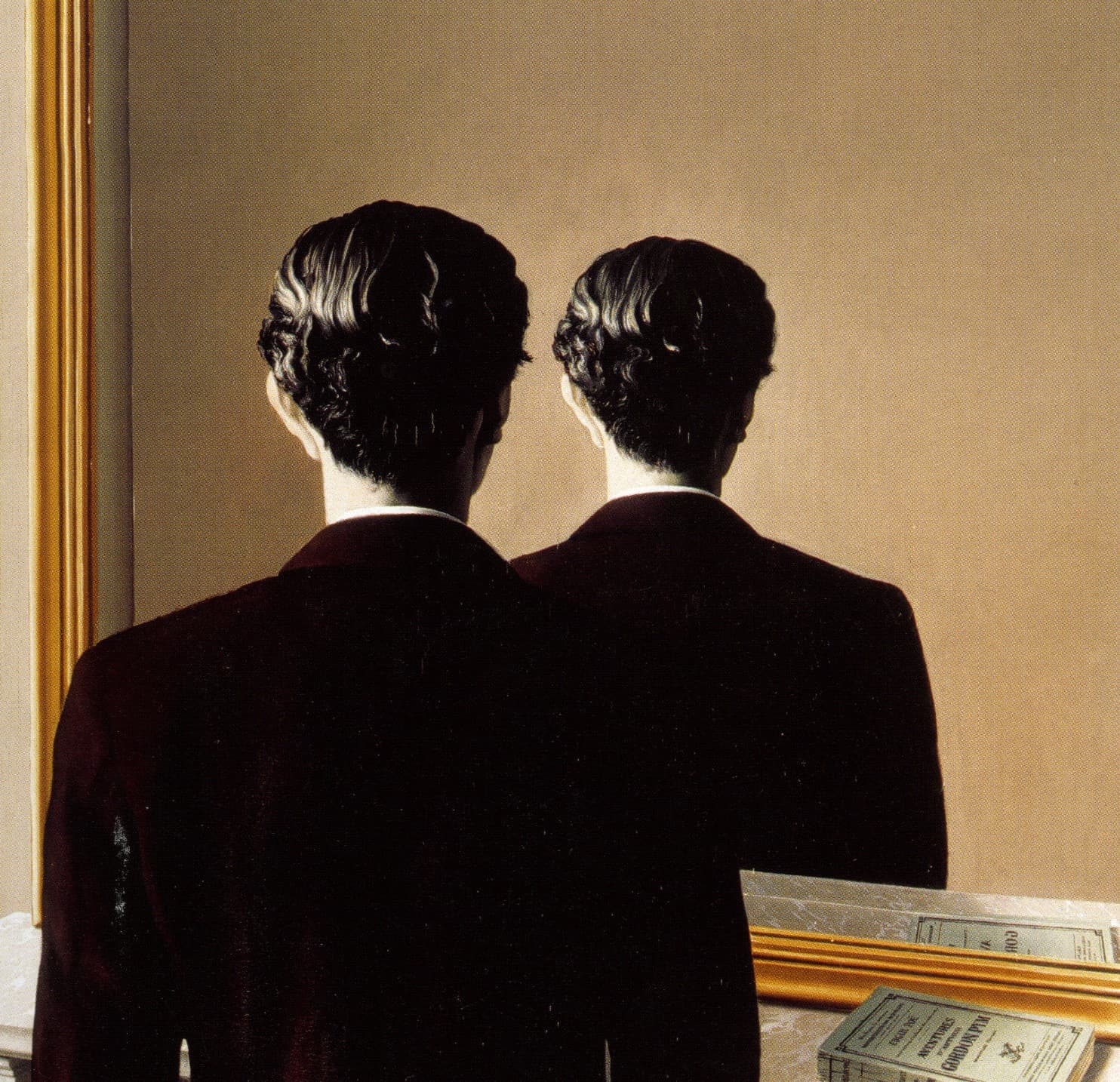

Magritte's man looks in the mirror and sees the back of his own head. The reflection refuses to be pinned down. That's the problem we're facing — except now the reflection can fork.

You can't shame a fork.

All views expressed here are my own.